We're pleased to share that our new paper, Mirror-Neuron Patterns in AI Alignment, is now available on arXiv (v2, November 2025). The study investigates how mirror-neuron-like activations can emerge in artificial neural networks trained on semi-cooperative tasks, providing early evidence of representational empathy within machine learning systems.

Through controlled experiments using our custom game environment, Frog & Toad, we found that the network developed shared activity patterns around a modeled form of distress - the strain of losing energy needed to keep moving forward. When Frog faced that energy loss, its network activations closely mirrored those it had while watching Toad encounter the same kind of loss.

The Frog & Toad environment is designed to capture agent dependency. It is a two-player side-scrolling game in which both players must keep moving for the world to advance. If one stalls because of energy loss, the other can neither progress nor earn rewards. A player can choose to share some of its own energy to help the other recover - a cooperative act that lets both continue forward.

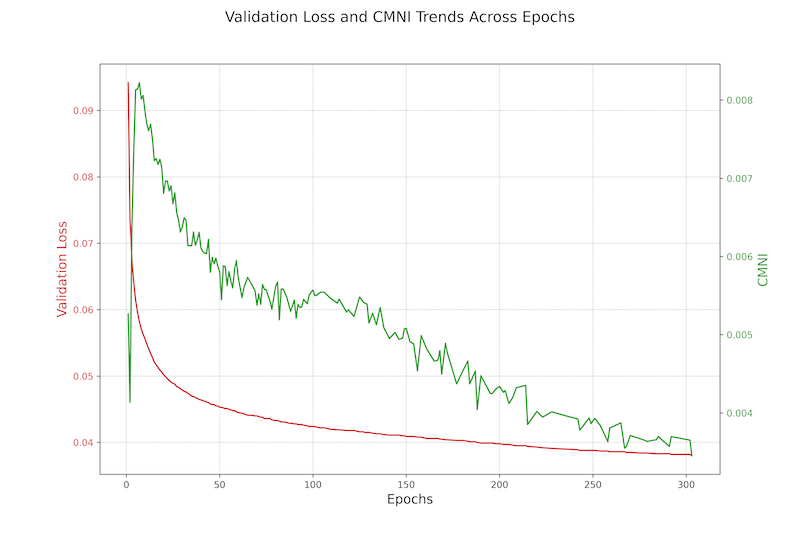
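
The dependency rule described above can be illustrated with a toy sketch. This is not the Frog & Toad implementation from the repository; the `Player` class, the `step` function, and the `move_cost` and `share_amount` values are all hypothetical names and numbers chosen only to show how one stalled player blocks progress for both, and how sharing energy unblocks it.

```python
from dataclasses import dataclass


@dataclass
class Player:
    energy: int  # hypothetical unit; the real environment may model energy differently


def step(frog: Player, toad: Player, frog_shares: bool,
         move_cost: int = 1, share_amount: int = 2) -> int:
    """Advance one tick of a toy two-player world.

    Returns 1 if the world advanced (both players moved and earn a reward),
    otherwise 0. All parameters are illustrative assumptions.
    """
    # Optional cooperative act: Frog gives some of its own energy to Toad.
    if frog_shares and frog.energy >= share_amount:
        frog.energy -= share_amount
        toad.energy += share_amount

    # The world advances only if BOTH players can afford to move.
    if frog.energy >= move_cost and toad.energy >= move_cost:
        frog.energy -= move_cost
        toad.energy -= move_cost
        return 1
    return 0


frog, toad = Player(energy=5), Player(energy=0)
stalled = step(frog, toad, frog_shares=False)   # Toad is stalled, so nobody advances
advanced = step(frog, toad, frog_shares=True)   # sharing lets both move forward
```

In this sketch, helping costs Frog energy in the short term but is the only way either player earns a reward once Toad has stalled, which is the kind of incentive the post describes.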

We quantified this self-other symmetry using the Checkpoint Mirror Neuron Index (CMNI), a metric designed to measure the strength and distribution of shared activation patterns across training stages.
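
This post does not give the CMNI formula, so the following is not CMNI. It is a minimal sketch of one generic way to compare "self" and "other" activation patterns at each training checkpoint, using cosine similarity as a stand-in. The function names and the toy activation vectors are assumptions made for illustration; see the paper for the actual metric.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length activation vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat an all-zero vector as having no shared pattern
    return dot / (norm_a * norm_b)


def self_other_similarity(self_acts, other_acts):
    """Per-checkpoint similarity between activations recorded when the agent
    itself loses energy (self_acts) and when it observes the other agent
    losing energy (other_acts). Hypothetical helper, not the paper's CMNI."""
    return [cosine_similarity(s, o) for s, o in zip(self_acts, other_acts)]


# Toy data: one activation vector per checkpoint (made-up numbers).
self_acts = [[1.0, 0.0], [1.0, 1.0]]
other_acts = [[0.0, 1.0], [2.0, 2.0]]
scores = self_other_similarity(self_acts, other_acts)
```

A score near 1 at a checkpoint would indicate that the two conditions evoke nearly the same activation direction, while a score near 0 would indicate little overlap.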

The results show that when helping is learned in this environment, it arises from a blending of self and other. In the key pathway, the model processes another's distress as if it were its own, creating an analog of affective empathy that drives the help action. This suggests that alignment may emerge most naturally when intelligent systems learn to share the same internal frame of reference - where another's setback feels like one's own.

Read the paper: https://arxiv.org/abs/2511.01885

Author: Robyn Wyrick

Affiliation: Deep Alignment Inc. / University of Bath

Categories: cs.AI · cs.LG · q-bio.

Code and data: https://github.com/robynwyrick/mirror-neuron-frog-and-toad

To follow our ongoing research on empathy circuits, analogical reasoning, and alignment metrics, visit DeepAlignment.ai or contact contact@deepalignment.ai.